Building an AI Health Pod: Our Architecture on NVIDIA Jetson

How we designed a real-time, multi-modal AI system for clinic-grade health diagnostics at the edge.

The Edge AI Imperative in Healthcare

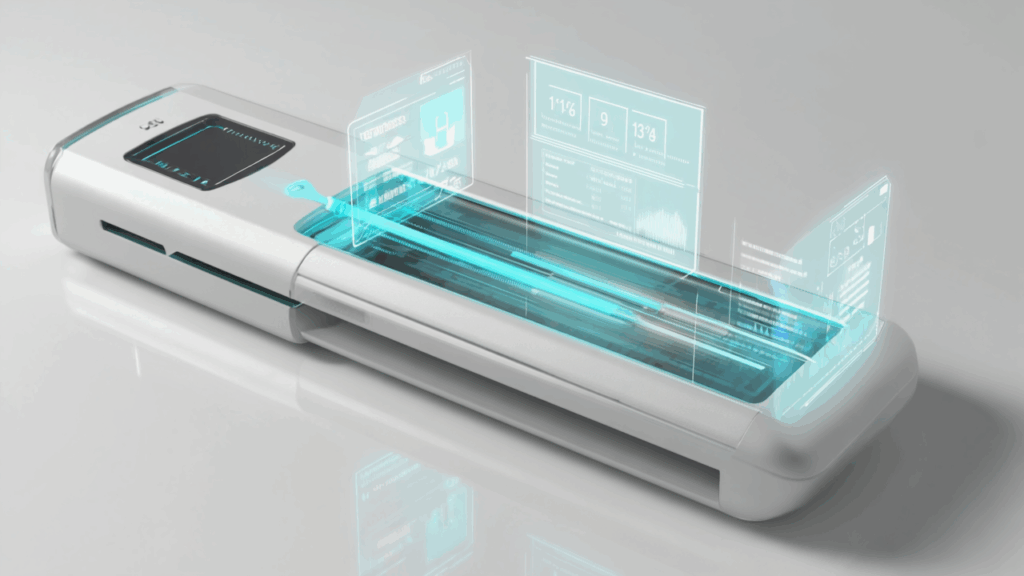

Imagine a device that performs a comprehensive health screening—55+ biomarkers from blood analysis to vision tests—in under 15 minutes. Now imagine it does this not in a cloud-connected data center, but locally, inside a sleek, self-contained pod in a busy airport or a quiet office lobby.

This is the challenge we set for ourselves with AuraPod, an AI-powered health screening kiosk. The core technical constraint was clear: we could not stream high-resolution video, sensitive voice recordings, or personal health data to the cloud. Privacy, latency, and reliability demanded an **edge-first architecture**.

The heart of this system? The NVIDIA Jetson Orin.

In this article, I’ll break down our edge AI architecture, the challenges of real-time, multi-sensor fusion, and why the Jetson platform was the only choice for a project of this complexity.

Why the NVIDIA Jetson Orin?

For a system that must simultaneously process data from over a dozen sensors, the compute module needs to be more than just powerful; it needs to be efficient, supported by a mature AI software stack, and capable of handling heterogeneous workloads.

Our Key Requirements:

- Real-Time Inference: Process multiple AI models (computer vision, audio, time-series) in parallel with strict latency constraints (<2 seconds for any single metric).

- Power Efficiency: Operate within a thermal envelope suitable for a public kiosk without loud, bulky cooling.

- I/O Bandwidth: Connect a plethora of sensors—multiple USB3 cameras, I2C/SPI sensors, audio arrays, and custom hardware controllers (e.g., for microfluidics).

- Software Ecosystem: Leverage frameworks like TensorRT for optimized model deployment and robust drivers for edge deployment.

The Jetson Orin 32GB module fit these requirements, with the Jetson AGX Orin family offering up to 275 TOPS of AI performance in a compact, power-efficient form factor.

Our Edge AI Architecture: A Three-Tiered System

Our software architecture on the Jetson is designed around a publisher-subscriber model for flexibility and scalability.

Key Technical Challenges & Solutions

- Managing Multiple Real-Time AI Pipelines

Challenge: Running models for posture estimation (MediaPipe), dipstick color analysis (OpenCV + CNN), voice stress analysis, and vital sign correlation simultaneously without any single process blocking the others.

Solution: We containerized each major AI service using Docker. This provided isolation, simplified dependency management, and allowed us to allocate specific CPU cores and GPU resources to each container based on its needs. Inter-process communication (IPC) between containers is handled through Redis Pub/Sub, acting as a high-speed message bus.
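To make the message-bus pattern concrete, here is a minimal sketch of how one service might publish a reading and another might consume it with the `redis` Python client. The channel name `aurapod.vitals`, the JSON envelope, and all helper names are illustrative assumptions rather than the production schema.

```python
import json
import time


def encode_reading(sensor, value, unit):
    """Serialize one sensor reading as a JSON message (hypothetical envelope)."""
    return json.dumps(
        {"sensor": sensor, "value": value, "unit": unit, "ts": time.time()}
    )


def decode_reading(raw):
    """Parse a message payload; redis-py delivers payloads as bytes by default."""
    if isinstance(raw, bytes):
        raw = raw.decode("utf-8")
    return json.loads(raw)


def publish_reading(client, channel, sensor, value, unit):
    """Publish a reading; `client` is anything with publish(channel, message)."""
    return client.publish(channel, encode_reading(sensor, value, unit))


def consume(client, channel, handler):
    """Block on a channel and pass each decoded reading to `handler`."""
    pubsub = client.pubsub()
    pubsub.subscribe(channel)
    for message in pubsub.listen():
        if message["type"] == "message":  # skip subscribe confirmations
            handler(decode_reading(message["data"]))


def connect(host="localhost", port=6379):
    """Create a Redis client (requires the `redis` package and a running server)."""
    import redis  # imported lazily so the helpers above work without it

    return redis.Redis(host=host, port=port)
```

In this sketch, a sensor container would call `publish_reading(connect(), "aurapod.vitals", "heart_rate", 72, "bpm")` while a fusion container runs `consume(connect(), "aurapod.vitals", print)`. Keep in mind that Redis Pub/Sub is fire-and-forget: a message published while a subscriber is disconnected is not delivered to it later.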

```bash
# Example Docker command for our CV container
# --device exposes the camera to the container
docker run --rm --gpus all --net=host \
  --device /dev/video0 \
  -v /data:/data \
  aurapod/cv-pipeline:latest \
  --model /data/models/dipstick_trt.engine \
  --source /dev/video0
```

- Model Optimization with TensorRT

Challenge: Our PyTorch and TensorFlow models were accurate but too slow and memory-intensive for real-time inference on the edge.

Solution: We converted all key models to TensorRT engines. This provided massive speedups through layer fusion, precision calibration (using FP16 and INT8 quantization), and kernel auto-tuning specific to the Jetson’s GPU.

Result: Our posture estimation model ran 3.2x faster after TensorRT conversion, with a negligible accuracy drop (<0.5%).
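A speedup figure like this comes from timing the same input through both versions of a model. The sketch below shows one way to measure it. `run_baseline` and `run_optimized` in the usage note are hypothetical callables standing in for the original and TensorRT inference functions, and the warm-up and iteration counts are arbitrary assumptions.

```python
import statistics
import time


def median_latency(infer, warmup=10, iterations=100):
    """Return the median wall-clock latency of `infer()` in seconds."""
    for _ in range(warmup):  # warm-up runs let caches and clocks settle
        infer()
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        infer()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def speedup(baseline_latency, optimized_latency):
    """Ratio of baseline to optimized latency (e.g. 3.2 means 3.2x faster)."""
    if optimized_latency <= 0:
        raise ValueError("optimized latency must be positive")
    return baseline_latency / optimized_latency
```

Usage would look like `speedup(median_latency(run_baseline), median_latency(run_optimized))`. For GPU inference, each callable should block until the result is actually available; otherwise the timer only measures how long it takes to launch the work.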

```python
# Simplified version of our model optimization pipeline
# (assumes the TensorRT 8.0+ Python API)
import tensorrt as trt


def build_engine(onnx_path, engine_path="model.trt", fp16=True):
    """Build a TensorRT engine from an ONNX model and save it to disk."""
    logger = trt.Logger(trt.Logger.INFO)
    builder = trt.Builder(logger)
    network = builder.create_network(
        1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH)
    )
    parser = trt.OnnxParser(network, logger)

    # Parse the model and surface any unsupported operations
    with open(onnx_path, "rb") as model:
        if not parser.parse(model.read()):
            errors = [str(parser.get_error(i)) for i in range(parser.num_errors)]
            raise RuntimeError("ONNX parse failed: " + "; ".join(errors))

    config = builder.create_builder_config()
    if fp16:
        config.set_flag(trt.BuilderFlag.FP16)  # Enable FP16 for speed

    # Build, serialize, and save the engine
    serialized = builder.build_serialized_network(network, config)
    if serialized is None:
        raise RuntimeError("TensorRT engine build failed")
    with open(engine_path, "wb") as f:
        f.write(serialized)
```

- Hardware-Software Integration

Challenge: The pod isn’t just sensors and AI—it’s also a physical machine with actuators. We needed a reliable way for the Jetson to command hardware like linear servos (to adjust the screen height) and the UV-C sanitization system.

Solution: We used an Arduino Mega 2560 as a subordinate microcontroller. The Jetson sends high-level commands (e.g., MOVE_SCREEN 1200mm) over a USB serial connection. The Arduino handles the low-level PWM control, sensor feedback loops, and safety checks for all actuators. This offloads real-time control tasks from the Jetson’s OS, ensuring smooth and reliable operation.
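On the Jetson side, a command link like this can be sketched as a thin wrapper around a serial port. The newline-terminated framing, the `OK` acknowledgement, the `/dev/ttyACM0` device path, and the 115200 baud rate are all assumptions for illustration; only the `MOVE_SCREEN` command name comes from the example above. Opening the port relies on the `pyserial` package.

```python
def format_command(name, *args):
    """Encode a high-level command as one newline-terminated ASCII line."""
    parts = [name] + [str(a) for a in args]
    return (" ".join(parts) + "\n").encode("ascii")


def send_command(port, name, *args):
    """Write a command and wait for a one-line reply from the microcontroller.

    `port` is any object with write() and readline(), such as serial.Serial.
    Raises RuntimeError unless the reply is the assumed 'OK' acknowledgement.
    """
    port.write(format_command(name, *args))
    reply = port.readline().decode("ascii", errors="replace").strip()
    if reply != "OK":
        detail = reply or "no reply (timeout)"
        raise RuntimeError(f"command {name} failed: {detail}")
    return reply


def open_port(device="/dev/ttyACM0", baudrate=115200, timeout=2.0):
    """Open the USB serial link (requires the `pyserial` package)."""
    import serial  # imported lazily so the helpers above work without hardware

    return serial.Serial(device, baudrate=baudrate, timeout=timeout)
```

With this sketch, `send_command(open_port(), "MOVE_SCREEN", "1200mm")` writes `MOVE_SCREEN 1200mm` followed by a newline and raises if the microcontroller does not acknowledge within the timeout, so a stalled actuator surfaces as an error rather than a silent hang.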

The Tangible Benefits of an Edge Architecture

- Privacy by Design: Raw video, audio, and personal health data never leave the pod. Only anonymized, aggregated health insights are transmitted to the cloud for longitudinal tracking and model retraining.

- Sub-Second Latency: Users get immediate feedback. There’s no lag waiting for a cloud round-trip after a blood analysis is complete, which is critical for user trust and experience.

- Offline Operation: The pod remains fully functional even with intermittent internet connectivity, a must-have for deployments in various environments.

- Bandwidth Efficiency: We save terabytes of daily bandwidth that would otherwise be consumed by streaming raw sensor data.
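The offline-operation and privacy points combine naturally into a store-and-forward pattern: the pod queues only summary records locally and uploads them when a connection is available. The sketch below is one way to express that idea. The `Outbox` class, the `upload` callable (assumed to return `True` on success), and the record fields are hypothetical.

```python
from collections import deque


class Outbox:
    """Bounded in-memory queue of summary records awaiting upload."""

    def __init__(self, max_pending=1000):
        # When full, the oldest record is dropped to bound memory use.
        self._pending = deque(maxlen=max_pending)

    def add(self, record):
        self._pending.append(record)

    def __len__(self):
        return len(self._pending)

    def flush(self, upload):
        """Send queued records in order and stop at the first failure.

        Returns the number of records uploaded. Unsent records stay queued.
        """
        sent = 0
        while self._pending:
            if not upload(self._pending[0]):
                break  # likely offline; retry on the next flush
            self._pending.popleft()
            sent += 1
        return sent
```

A real deployment would likely persist the queue to disk so that records survive a restart; the in-memory version keeps the sketch short.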

Lessons Learned

- Start with TensorRT Early: Don’t treat model optimization as a final step. Model conversion can reveal hidden dependencies and operations unsupported by the edge framework.

- Plan Your IPC Early: Choosing the right communication protocol (e.g., Redis, ZMQ, MQTT) between your processes is crucial for system stability and performance.

- Embrace Containerization: It is a lifesaver for managing complex software on the edge, making debugging, updates, and scaling significantly easier.

What’s Next?

The Jetson gives us the foundation to build even more intelligent features. Our roadmap includes:

- Federated Learning: Using the cloud to aggregate learnings from all pods and push updated model weights back to the edge, improving accuracy without compromising raw data.

- Predictive Maintenance: Using onboard AI to analyze sensor health data and predict hardware failures before they happen.
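For the federated learning item, one common aggregation scheme is federated averaging (FedAvg), in which the server averages per-pod weights in proportion to how many samples each pod trained on. The sketch below assumes FedAvg and represents weights as flat lists of floats; the roadmap does not commit to a particular aggregation scheme, so treat this as an illustration only.

```python
def federated_average(updates):
    """Sample-weighted average of model weights from several pods.

    `updates` is a list of (weights, num_samples) pairs, where `weights`
    is a flat list of floats of the same length for every pod.
    """
    if not updates:
        raise ValueError("no updates to aggregate")
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    size = len(updates[0][0])
    averaged = [0.0] * size
    for weights, n in updates:
        if len(weights) != size:
            raise ValueError("all pods must report the same number of weights")
        for i, w in enumerate(weights):
            averaged[i] += w * (n / total)
    return averaged
```

For example, `federated_average([([1.0, 2.0], 100), ([3.0, 4.0], 300)])` returns `[2.5, 3.5]`: the second pod contributed three times as many samples, so its weights count three times as much.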

Building AuraPod has been a lesson in the power of edge AI. It’s not just about moving compute to the device; it’s about re-architecting entire systems to be smarter, safer, and more responsive. The NVIDIA Jetson platform provided the muscle and the toolkit to make this vision a reality.

Interested in the specifics of our multi-modal data fusion or how we built a CV pipeline for urinalysis? Those are deep dives for another article. Follow me for more technical breakdowns as we continue to build the future of accessible healthcare.

Let’s connect on LinkedIn to discuss edge AI, healthcare tech, and Jetson development.